Is AI Actually Thinking or Just Guessing? Cracking the Code of AI Logic

The Hidden Flaw in Modern AI: Why Logic Fails

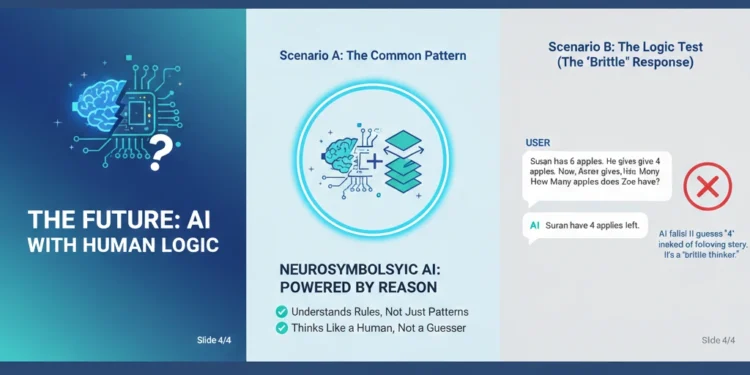

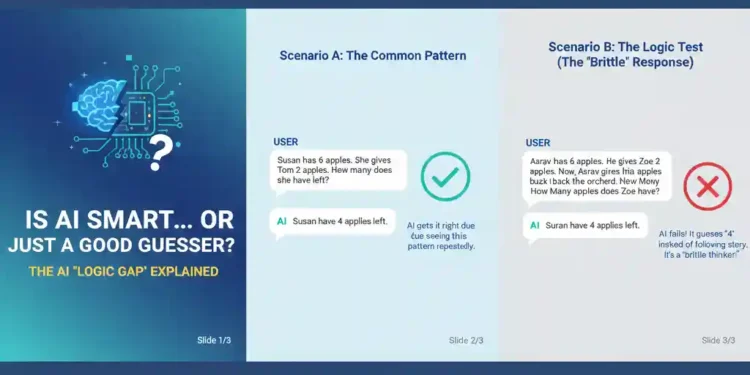

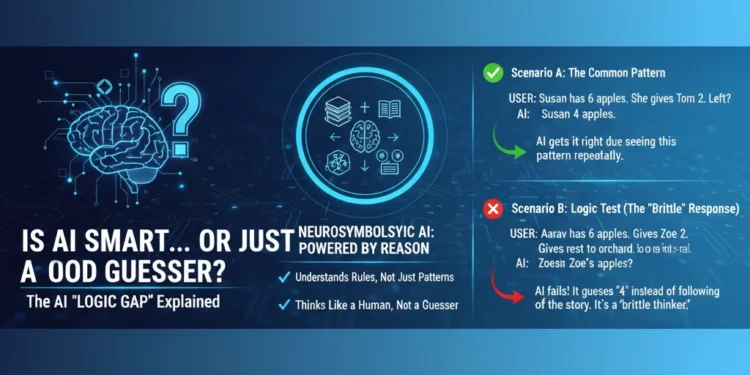

Most of us use Large Language Models (LLMs) like ChatGPT every day for everything from writing emails to coding. But here is a startling truth: a simple change, such as swapping a name in a math problem, can be enough to make an AI get the answer wrong.

For example, if you ask an AI: “Susan has 6 apples and gives Tom 2; how many are left?” it will usually answer correctly (6 − 2 = 4). But if you change the names or add a tiny twist, the model is noticeably more likely to get it wrong, even though the arithmetic has not changed.
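One way to picture this kind of brittleness is a small consistency check: ask the same question with different names and count how often the answer stays right. The sketch below is only an illustration. `brittle_model` is a made-up stand-in that has memorised a single phrasing, not a real LLM, and the extra names are hypothetical.

```python
from typing import Callable, List, Tuple

# Hypothetical template: the numbers stay fixed, only the names change.
TEMPLATE = "{giver} has 6 apples and gives {receiver} 2; how many are left?"
EXPECTED = 6 - 2  # the correct answer does not depend on the names


def make_variants(name_pairs: List[Tuple[str, str]]) -> List[str]:
    """Build one prompt per (giver, receiver) pair."""
    return [TEMPLATE.format(giver=g, receiver=r) for g, r in name_pairs]


def consistency_rate(model: Callable[[str], int], prompts: List[str]) -> float:
    """Fraction of prompts the model answers correctly."""
    correct = sum(1 for p in prompts if model(p) == EXPECTED)
    return correct / len(prompts)


def brittle_model(prompt: str) -> int:
    """Toy stand-in (an assumption): it only 'knows' the Susan/Tom phrasing."""
    memorised = {TEMPLATE.format(giver="Susan", receiver="Tom"): 4}
    return memorised.get(prompt, 0)


variants = make_variants(
    [("Susan", "Tom"), ("Priya", "Omar"), ("Lena", "Kofi"), ("Ahmed", "Yuki")]
)
print(consistency_rate(brittle_model, variants))  # 0.25
```

A system that really reasons about the problem should score 1.0 here, because nothing about the maths depends on who is holding the apples.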

Why does this happen? Current AI models are built on statistical patterns, not the structural reasoning humans use. They are “brilliant mimics” but “brittle thinkers.” They don’t understand the rules of the world; they just know which word usually comes next.
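To get a feel for what “knowing which word usually comes next” means, here is a deliberately tiny sketch. It counts which word follows which in a made-up three-sentence corpus and then predicts the most frequent follower. Real LLMs are vastly more sophisticated than this, so treat it as an analogy rather than a description of how ChatGPT works.

```python
from collections import Counter, defaultdict

# Made-up training text (an assumption for illustration only).
corpus = (
    "susan has six apples . tom has two apples . "
    "susan gives tom two apples ."
).split()

# Count how often each word is followed by each other word.
follow_counts = defaultdict(Counter)
for current, nxt in zip(corpus, corpus[1:]):
    follow_counts[current][nxt] += 1


def most_likely_next(word: str) -> str:
    """Return the word seen most often after `word`, or a marker if unseen."""
    counts = follow_counts.get(word)
    if not counts:
        return "<unknown>"
    return counts.most_common(1)[0][0]


print(most_likely_next("two"))    # apples
print(most_likely_next("maria"))  # <unknown>
```

The predictor has no idea what an apple is or what giving means. It only repeats what it has counted, and it has nothing to say about a name it never saw.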

Integrating Human Logic to Enhance AI Models

Martha Lewis, Assistant Professor of Neurosymbolic AI and a 2024 MacGillavry Fellow at the Institute of Logic, Language and Computation (ILLC), is working to change this. Her mission is to move AI away from “guessing” and toward “reasoning.”

What is Neurosymbolic AI?

To make AI more reliable, Lewis is combining two different technologies:

Machine Learning: The “intuitive” side of AI that is great at recognizing patterns and language.

Symbolic AI: The “logical” side that follows strict rules and human-like structures.

By integrating human logic to enhance AI models, Lewis aims to create systems that can handle “new data”—situations the AI hasn’t seen in its training—without getting confused.
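The two halves described above can be sketched in a few lines. This is not Lewis's method or any real neurosymbolic system, just a minimal illustration of the division of labour. A regular expression stands in for the learned pattern-recognition side (a real system would use a trained model there), and a small explicit rule plays the symbolic side. The names `Transfer`, `extract` and `apples_left` are invented for this example.

```python
import re
from dataclasses import dataclass


@dataclass
class Transfer:
    giver: str
    receiver: str
    start: int
    given: int


# Stand-in for the pattern-recognition side (assumption: a real system
# would use a learned model here, not a regular expression).
PATTERN = re.compile(r"(\w+) has (\d+) apples and gives (\w+) (\d+)")


def extract(text: str) -> Transfer:
    """Turn free text into a structured fact the rule side can work with."""
    match = PATTERN.search(text)
    if match is None:
        raise ValueError("could not parse the problem")
    giver, start, receiver, given = match.groups()
    return Transfer(giver, receiver, int(start), int(given))


def apples_left(t: Transfer) -> int:
    """Symbolic side: an explicit rule that never looks at the names."""
    if t.given > t.start:
        raise ValueError("cannot give away more apples than you have")
    return t.start - t.given


for question in [
    "Susan has 6 apples and gives Tom 2; how many are left?",
    "Priya has 6 apples and gives Omar 2; how many are left?",
]:
    print(apples_left(extract(question)))  # 4 both times
```

Because the rule only sees the numbers, swapping Susan for Priya cannot change the result. That is the kind of robustness to “new data” the approach is aiming for.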

Opening the “Black Box” of AI

One of the biggest challenges in tech today is the AI Black Box. Even the developers of these models often don’t know exactly how an AI arrives at a specific answer.

Lewis and her team at the ILLC are developing methods to look inside these complex systems. “It’s very important to know how the models work to understand why it gives a certain response,” Lewis explains.

Benefits of an “Explainable” AI:

Trust: Users can see the logic behind a decision.

Safety: It helps catch logical errors before they cause harm in critical fields like medicine or law.

Reliability: The AI becomes a true partner that reasons rather than just echoing data.

The Future: AI That Thinks Like a Human

In 2024, as a MacGillavry Fellow, Lewis began building a dedicated research group to bridge the gap between human psychology and informatics. By working with experts in linguistics and psychology at LAB42, she is helping design the next generation of LLMs.

The future of technology isn’t just about having more data; it’s about having better logic.

Frequently Asked Questions about AI Logic

Q1: Why do Large Language Models (LLMs) struggle with simple logic? A: Most AI models are built on statistical patterns rather than true reasoning. While they are great at predicting the next word in a sentence, they often fail when a problem is phrased in a way they haven’t seen before. This gap between familiar and unfamiliar phrasings is sometimes described as a “novelty gap.”

Q2: What does “integrating human logic to enhance AI models” mean? A: This refers to the field of Neurosymbolic AI, where researchers like Martha Lewis combine the pattern-recognition power of Machine Learning with the rule-based logic of Symbolic AI. The aim is for the AI to follow consistent rules, much as people do when they reason step by step.

Q3: What is the “Black Box” in AI? A: The “Black Box” refers to the internal decision-making process of an AI model that is hidden from users and developers. Because these models are so complex, it is often impossible to see exactly how or why an AI arrived at a specific (and sometimes incorrect) answer.

Q4: How could Neurosymbolic AI improve ChatGPT and other models? A: The idea is that adding a layer of symbolic logic makes the AI “theoretically grounded.” Instead of relying on probability alone, it represents the relationships between objects and rules explicitly, which should make it more reliable and less “brittle” when faced with new scenarios.

Q5: Who is Martha Lewis and what is her role at ILLC? A: Martha Lewis is an Assistant Professor of Neurosymbolic AI and a MacGillavry Fellow at the Institute of Logic, Language and Computation (ILLC). She leads a research group focused on making AI models more explainable and human-like in their reasoning.
